|

Fudan University, Google, Nuro, Tencent

|

|

Pixel2Mesh Pipeline.

|

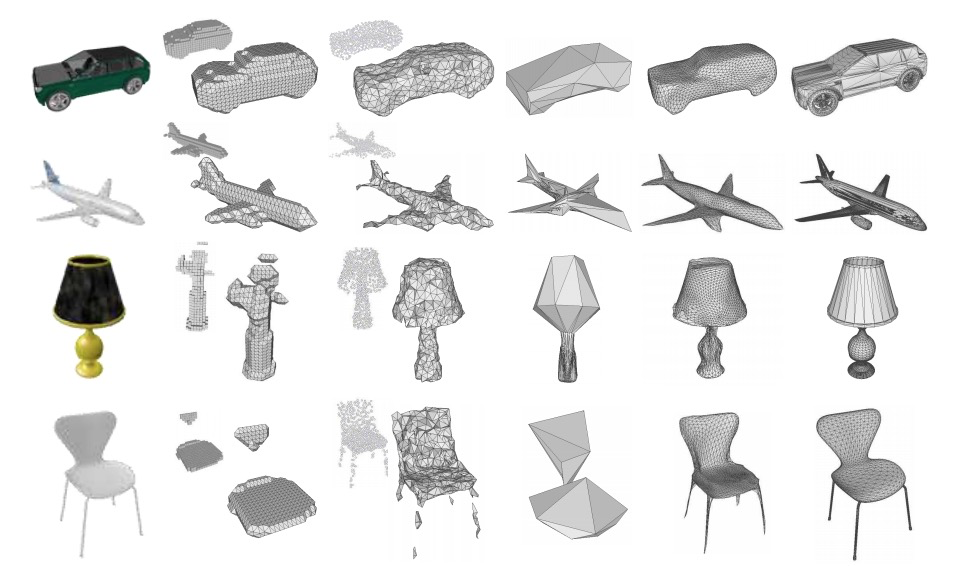

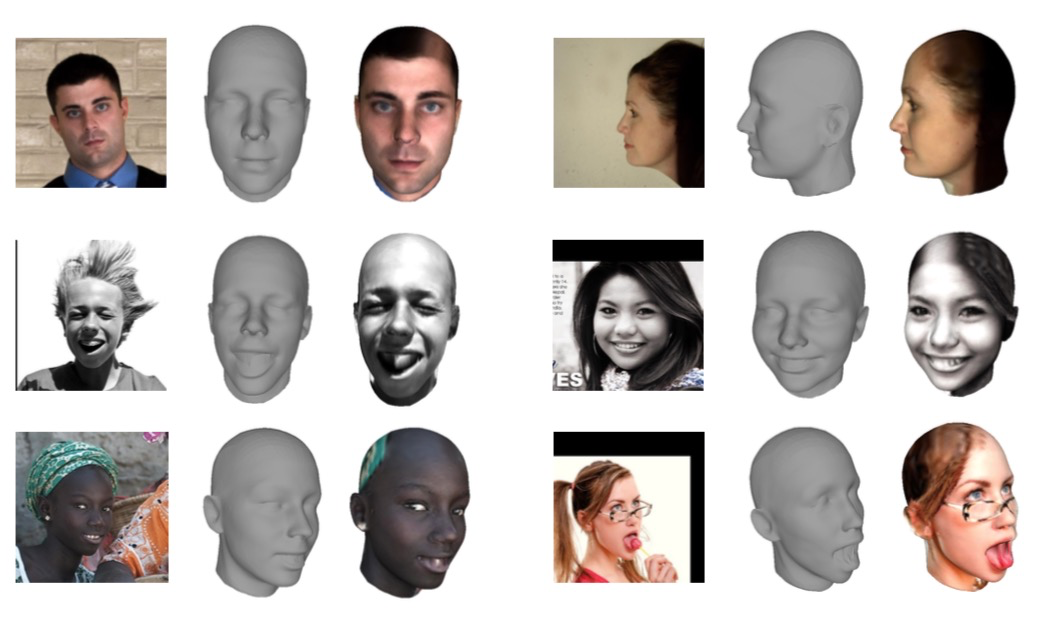

We propose an end-to-end deep learning architecture that produces a 3D shape as a triangular mesh from a single color image. Limited by the nature of deep neural networks, previous methods usually represent a 3D shape as a volume or point cloud, and converting these to the more ready-to-use mesh model is non-trivial. Unlike existing methods, our network represents the 3D mesh in a graph-based convolutional neural network and produces correct geometry by progressively deforming an ellipsoid, leveraging perceptual features extracted from the input image. We adopt a coarse-to-fine strategy to keep the whole deformation procedure stable, and define various mesh-related losses that capture properties at different levels to guarantee visually appealing and physically accurate 3D geometry. Extensive experiments show that our method not only qualitatively produces mesh models with better details, but also achieves higher 3D shape estimation accuracy than the state of the art.
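As a rough illustration of what deforming a mesh with a graph-based convolution can look like, the sketch below applies one generic graph-convolution step to mesh vertices and uses the result as a per-vertex offset. It is a minimal sketch under stated assumptions, not the network described above: the layer form (a self term plus a sum over neighbours), the function names `graph_conv` and `deform`, and the weight matrices are all hypothetical, and the image-derived perceptual features, the coarse-to-fine stages and the losses are left out.

```python
import numpy as np


def graph_conv(features, edges, w_self, w_neigh):
    """One generic graph-convolution step over mesh vertices (assumed form).

    features: (V, C_in) array, one row per vertex.
    edges:    iterable of (i, j) vertex index pairs, treated as undirected.
    w_self, w_neigh: (C_in, C_out) weight matrices (hypothetical, normally learned).
    """
    num_vertices = features.shape[0]
    adjacency = np.zeros((num_vertices, num_vertices))
    for i, j in edges:
        adjacency[i, j] = 1.0
        adjacency[j, i] = 1.0
    # Each vertex combines its own feature with the sum of its neighbours' features.
    return features @ w_self + adjacency @ features @ w_neigh


def deform(vertices, edges, w_self, w_neigh):
    """Move every vertex by an offset predicted from local mesh structure."""
    return vertices + graph_conv(vertices, edges, w_self, w_neigh)


# Toy usage: a single triangle with small random weights (illustrative only).
triangle = np.array([[0.0, 0.0, 0.0], [1.0, 0.0, 0.0], [0.0, 1.0, 0.0]])
triangle_edges = [(0, 1), (1, 2), (0, 2)]
rng = np.random.default_rng(0)
moved = deform(triangle, triangle_edges,
               0.01 * rng.standard_normal((3, 3)),
               0.01 * rng.standard_normal((3, 3)))
```

Repeating such a step several times, starting from an ellipsoid mesh and feeding in image features at each vertex, is the general shape of the progressive deformation the abstract describes; the details here are assumptions made for the example.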

|

N. Wang, Y. Zhang, Z. Li, Y. Fu, W. Liu, Y. Jiang

Pixel2Mesh: Generating 3D Mesh Models from Single RGB Images. ECCV, 2018. TPAMI.2020.2984232. [Paper(ECCV)] [Paper(TPAMI)] [GitHub] |

|

|

|

Results on ShapeNet objects.

|

Results on AFLW2000-3D dataset.

|

Acknowledgements

This work was supported in part by NSFC Projects (U1611461, 61702108), Science and Technology Commission of Shanghai Municipality Projects (19511120700, 19ZR1471800, 19511132000), Shanghai Research and Innovation Functional Program (17DZ2260900), Shanghai Municipal Science and Technology Major Project (2018SHZDZX01), and Eastern Scholar (TP2017006). The website is modified from this template.

|